Dear young developer,

This letter is addressed to you, the new generation of developers. It aims to offer you encouragement and sincere advice on how to flourish in these remarkable times.

The Nationaal Cyber Security Centrum warns of an increased risk of data breaches with the arrival of even more powerful data models. So make sure you are prepared for this.

Our experience with robust, reliable software also comes in very handy when coding with the help of AI.

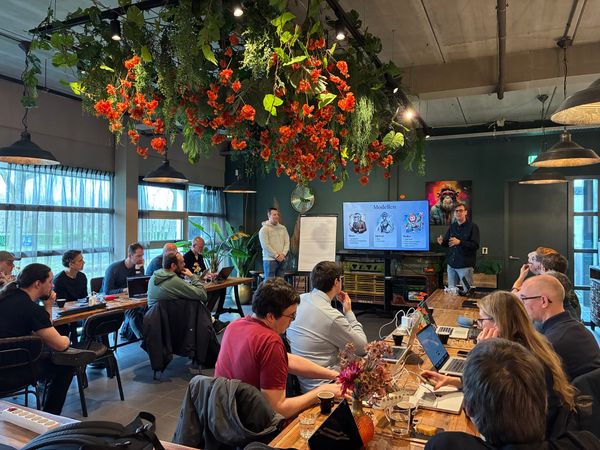

Developments in AI are almost impossible to keep up with, and our collective knowledge is advancing by leaps and bounds every week. So it was once again time for a small benchmark meeting to see how our colleagues use the tools and what we can learn from that.

Processes that used to take months are now completed in a matter of days. But it remains important to know what you are doing, and when to call in a professional.

Students will always push the limits of what is allowed; the trick is to use AI in a way that genuinely helps them progress. But which tools can you use for that? This blog post presents two good examples of helpful tools.

It was time again for our best practice meeting on Claude Code: what have we learned and discovered that our colleagues can learn from too?

While our developers are all busy experimenting with Claude Code, Eric and I mainly use Claude Cowork. Same program, more accessible for non-IT people, and remarkably good at making your life easier.

Our third Claude Code benchmark afternoon once again covered plenty of fun and instructive topics.

It seems as if every year brings another law that forces business owners to spend a lot of money. What exactly is NIS2, and how can we keep it manageable?

We see it differently: we are not rebuilding Confluence, but building a product that is a perfect fit for management systems (such as an ISMS). Why? OK, here is how it is... A bit of history: we have been loyal users of Confluence for a very long time. From the intensive use of the Atlassian products

An immersion in the wondrous world of Claude Code, to discover its possibilities and challenges together.